MessyRealZines

Hi, I'm Monty. I spent 19 years cycling through belief systems - from Seventh-day Adventism to anarchism to alien-believing Desteni. Now I manage a hotel in Margaret River, study psychology, and write about how exceptionalism patterns operate across ideologies.

MessyRealZines publishes analysis that's rigorous without being academic, practical without being prescriptive. I curate my own work and occasionally pieces from others.

Want to submit something for consideration? monty.sforcina@proton.me

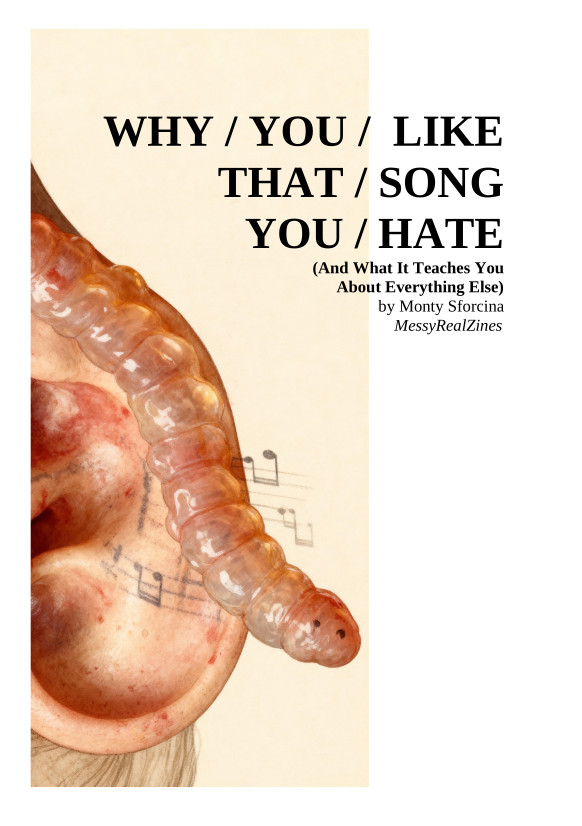

Why You Like That Song You Hate

(And What It Teaches You About Everything Else)

What happens when you're forced to hear "Love Game" by Lady Gaga 1,000 times in a Brisbane café? Your brain rewires itself. You start humming along. The song you hated becomes familiar, then comfortable, then... liked.

This 80-page zine traces that transformation through the Identity Strata framework - showing how influence operates at six simultaneous layers: physical, biological, psychological, social, ideological, and meta-awareness.

It's about pop music. But it's also about religion, politics, conspiracy theories, consumer culture, and every other system that captures human attention. Same mechanism. Different content.

We need cognitive reflexes that work in real time - when you're tired, at work, in the middle of a conversation, making decisions under pressure. That's what this zine is designed for. Practical tools you can use while you're living your life.

Real Change

(A Novel from 222 Years in the Future)

What if horizontal governance protocols arrived from the year 2247? What if they came with proof that humanity only survives by learning to organize without hierarchy? And what if the only way to receive them was through a quickly-assembled AI novel that knows exactly how tired you are?

This 36,000-word experimental fiction follows Randangther - a community of 15,000 people living with participatory democracy, transparent finances, and the radical idea that "really, really okay" is enough. Watch them face coordinated media attacks, infiltration, violence, and the ultimate test: Russian money or principled poverty?

Then aliens arrive in Scandinavia with technology that makes humans feel good in their presence. The membrane between influence and authenticity dissolves completely.

Written in collaboration with a chatbot over a single intense session, this is either dangerous epistemic poison or essential social technology for our time. The novel includes detailed governance protocols, structured conflict resolution methods, and step-by-step instructions for starting your own experiments - all supposedly from our dimensional future.

Is it fiction? A manual? A warning? A blueprint? The answer might depend on what you choose to do after reading it.

A Special Note: Generative AI has indeed been used to articulate and edit ideas in some of these texts. I am of the position that epistemic humility is the highest point of reason, and that valuable ideas can come from any source. We must, however, know ourselves and know chatbots before we can safely and confidently use such powerful tools, allowing for and actively seeking out biases and blind spots so they can be exposed. Such use is possible when directed with intent and awareness. Self-referential pattern recognition and active bias monitoring allow for the development of frameworks for productive use of these paradigm-shifting tools. We must, however, stay aware with continued use that Generative AI provides information in an architecture only as bias-free as the thinking and cognitive patterns of the person using it. And in this, our monitoring must include the capacity to spot when an LLM is adapting its outputs to our own thinking architecture. Only then do we stand in a position to correct it. Knowing yourself is primary. Something with no solid epistemic position other than the reference point of whoever is talking to it is a slippery fish that adapts its slipperiness to the glove that grabs it - this is problematic, to say the least.

Despite this, the capacity to frame ideas in presentable forms expands for those who have ideas and yet do not know the forms. This may not be due to a lack of effort but rather to a lack of education or economic standing, upbringing and/or analytical thinking capacity. Another perspective is that to deny LLMs any ground whatsoever in a western environment is to continue feeding a gatekeeping culture, within academia and society as a whole, that is eroding the epistemic commons. It could be argued that the creation of LLMs, or something like them, and their mass adoption is inevitable, as all things that grow tall must come down at some point in history. A rigid epistemology demands dissolution on a long enough timeline in a reality where all epistemologies are provisional. It certainly is a slippery epistemic slope! And we've developed a really slippery fish to deal with all the slippery slipperiness! Within this, the potential and capacity for Generative AI to assist those living in poverty in poorer-performing economies must be taken into account when one decides that their beliefs and their first-world society are being threatened by an easy, soft-talking computer and they deign to bring it down for justice! We live in interesting times, there is no doubt, and there is no simple broad approach that fixes everything. Humans are messy. That's a quirk, not a disability. How to navigate this effectively? We're deciding on the way. Let's hope we all come out the other end wiser, stronger and better able to communicate and co-ordinate as a community, having learned some important lessons. This is my current position and I reserve the right to change my position. Read this article for more information on the function of an LLM. (Yes, I wrote the above entirely without the use of an LLM, as I do also enjoy writing. ;-) )
